How to Humanize AI Text for Academic Writing: The Ultimate Guide

You spent weeks on your literature review. You carefully prompted an AI assistant to help you draft sections of your thesis. The output looked polished, well-structured, and technically accurate. You submitted your paper confident that the content was solid. Then you received the email every researcher dreads: "Your submission has been flagged for potential AI-generated content."

This scenario is no longer hypothetical. It is happening to thousands of graduate students, postdoctoral researchers, and early-career academics every single month. As AI detection technology becomes standard across universities and journals worldwide, the question is no longer whether your AI-assisted text will be scrutinized; it is when. If you have ever used AI to help draft any part of an academic paper, this guide is essential reading. The stakes are your degree, your publications, and your professional reputation.

The Problem with AI-Generated Academic Text

When a large language model generates text, it does not write the way a human does. Humans are inconsistent, idiosyncratic, and unpredictable in their prose. We write long sentences followed by short ones. We hesitate, digress, and circle back. We vary our vocabulary in ways that reflect our individual reading history, disciplinary training, and even our mood on a given day.

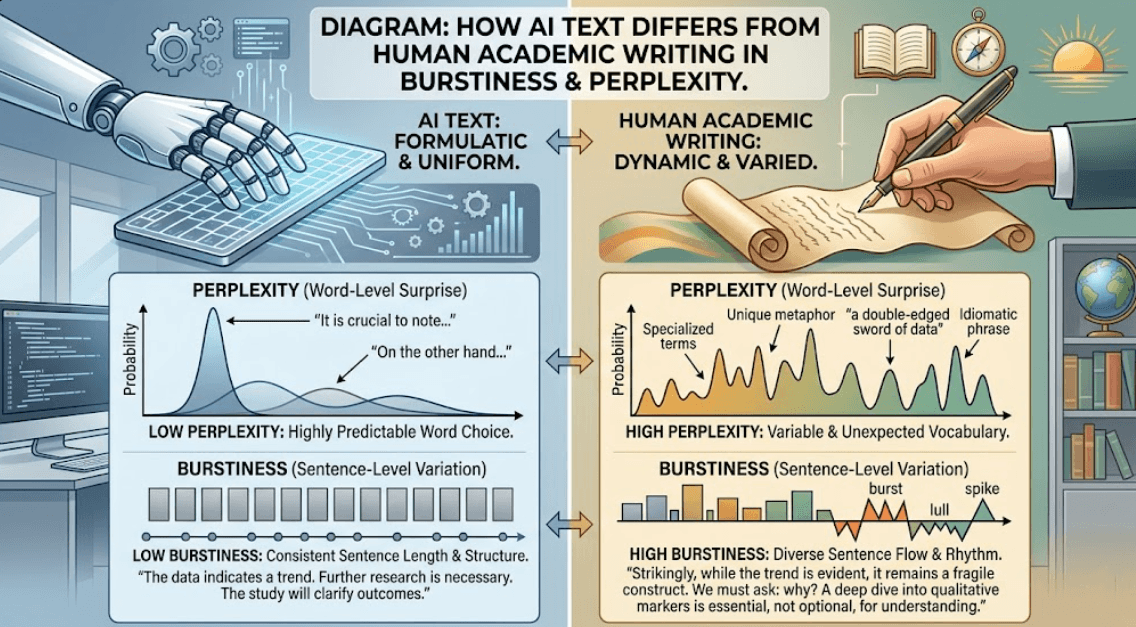

AI-generated text, by contrast, carries measurable statistical signatures. Researchers in computational linguistics have identified properties like burstiness (the variation in sentence complexity across a document) and perplexity (a measure of how predictable the next word in a sequence is). Human writing tends to exhibit high burstiness and variable perplexity. AI-generated text tends to be remarkably uniform across both dimensions.
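To make the two terms concrete, here is a minimal Python sketch. It rests on simplifying assumptions that are not from any real detector: burstiness is approximated as the coefficient of variation of sentence length, and perplexity is computed under a toy unigram model fitted to the text itself. Production detectors score perplexity with a large language model, so the function names and formulas here are illustrative only.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split after ., ! or ? followed by whitespace; count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: standard deviation of sentence length divided by its mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str) -> float:
    """Perplexity of the text under its own add-one-smoothed unigram model."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        return 0.0
    counts = Counter(tokens)
    vocab, total = len(counts), len(tokens)
    # Sum of log-probabilities of each token under the smoothed model.
    log_prob = sum(math.log((counts[t] + 1) / (total + vocab)) for t in tokens)
    return math.exp(-log_prob / total)


uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = "Stop. The committee deliberated for several hours before reaching any decision. Why?"
print(burstiness(uniform), burstiness(varied))
```

Three sentences of identical length give a burstiness of zero under this proxy, while the mix of one-word and ten-word sentences scores well above it.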

These are not superficial patterns that you can fix by swapping a few words or rearranging a paragraph. They are deep, structural characteristics embedded in how the text was generated at the token level. Detection algorithms are specifically engineered to measure these statistical fingerprints across sliding windows of text, and they are becoming more sophisticated with every update cycle.
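The sliding-window idea can be sketched in a few lines of Python. This is an assumption-laden illustration, not the method of any named detector: the statistic (coefficient of variation of sentence length), the window size, and the function name `windowed_variation` are all hypothetical choices.

```python
from statistics import mean, pstdev


def windowed_variation(lengths: list[int], window: int = 5, step: int = 1) -> list[float]:
    """Coefficient of variation of sentence length inside each sliding window."""
    scores = []
    # Slide a fixed-size window over the per-sentence word counts.
    for start in range(0, max(len(lengths) - window + 1, 0), step):
        chunk = lengths[start:start + window]
        avg = mean(chunk)
        scores.append(pstdev(chunk) / avg if avg else 0.0)
    return scores


# Word counts per sentence for a hypothetical passage.
print(windowed_variation([12, 14, 13, 12, 13, 4, 31, 9], window=5))
```

A run of windows with scores near zero marks a stretch of unusually even prose, which is why changing a few words inside that stretch leaves the windowed picture largely unchanged.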

"AI detection is not about catching plagiarists. It is about statistical classification, and your perfectly original AI-assisted draft can fail that classification just as easily as copied text fails a plagiarism check."

The critical point is this: AI detection does not care about your intent. It does not matter that you used AI as a drafting assistant rather than a ghostwriter. It does not matter that you verified every claim and edited every paragraph. If the statistical signature of the text matches AI-generation patterns, the detector will flag it. And once flagged, the burden of proving your innocence falls entirely on you.

What Happens When You Get Flagged

The consequences of an AI detection flag are not abstract. They are career-defining events that unfold with devastating speed. Understanding what actually happens when your paper is flagged should be enough to convince any researcher that this is not a risk worth taking.

At the university level, an AI detection flag on a thesis chapter or dissertation draft typically triggers a formal academic integrity investigation. Depending on your institution, this can mean an immediate hold on your degree progress, a hearing before an academic conduct board, and a permanent notation on your academic record. Even if the investigation ultimately clears you, the process itself can take months, during which your research is frozen, your funding may be jeopardized, and your relationship with your advisor may be strained beyond repair.

At the journal level, a flagged submission can result in desk rejection without review, blacklisting from future submissions to that journal, and, in cases where a paper has already been published, formal retraction. A retracted paper is not just a failed publication. It is a permanent, public mark against your name that appears in retraction databases and is visible to every future employer, collaborator, and funding agency that searches for your work.

At the career level, the damage compounds. A single integrity violation can disqualify you from grant applications, poison recommendation letters, and destroy the trust you have built with your research community. In competitive fields where tenure-track positions receive hundreds of applicants, even the rumor of an integrity question can eliminate you from consideration.

"A 2024 survey by the Council of Graduate Schools found that 67% of universities now use AI detection tools on thesis submissions, and 41% have already initiated formal investigations based on AI detection flags."

The psychological toll is equally severe. Researchers who have been falsely flagged describe months of anxiety, imposter syndrome, and a paralyzing fear of writing that can derail an entire research program. The emotional cost of fighting a false accusation (assembling drafts, version histories, writing logs, and character references to prove your innocence) is something no researcher should have to endure.

Why Manual Humanization Is a Losing Battle

When researchers first learn about AI detection, their natural instinct is to try to humanize AI text manually. They go through the AI-generated draft sentence by sentence, rewording phrases, breaking up paragraphs, and adding personal touches. On the surface, this seems like a reasonable approach. In practice, it is a losing battle that wastes enormous amounts of time and often makes the problem worse.

Here is why manual humanization fails: the statistical patterns that detection algorithms measure operate at a level of complexity that is invisible to the human eye. You cannot perceive burstiness distributions or perplexity curves by reading your own text. You might change fifty words in a paragraph and still leave the underlying statistical signature completely intact, because the signature is not about individual word choices but about the mathematical relationships between sequences of tokens across the entire document.

Worse, detection algorithms are not static. Turnitin, GPTZero, Originality.ai, and other major detection platforms update their models regularly, sometimes weekly. A manual humanization strategy that seemed to work last month can fail completely against the next model update. You would need to continuously test your text against every major detector, adjust your approach, re-test, and repeat: a process that could consume more time than writing the paper from scratch.

There is also the paradox of over-editing. When researchers manually edit AI text extensively, they often introduce new detectable patterns (repetitive restructuring approaches, consistent substitution strategies, or uniform sentence-length modifications) that create their own statistical fingerprint. Detection algorithms are increasingly trained to recognize these post-generation editing patterns as well.

The fundamental problem is asymmetry: you are one person with limited time, trying to outsmart a detection system that is backed by teams of machine learning engineers, trained on billions of text samples, and updated on a continuous cycle. This is not a fair fight, and it is not one you can win through manual effort alone.

Why Generic Rewriting Tools Make It Worse

If manual humanization is impractical, many researchers turn to generic paraphrasing tools such as Quillbot, Wordtune, Spinbot, and similar services. These tools promise to rewrite your text in a "more human" way. For casual content like blog posts or social media copy, they may be adequate. For academic writing, they are not just inadequate; they are actively dangerous.

The first problem is detection. Generic paraphrasing tools have their own statistical signatures. They tend to apply uniform transformations (synonym substitution, passive-to-active voice conversion, sentence splitting) in predictable patterns. Detection algorithms have been trained on the output of these tools specifically. Running your text through Quillbot does not make it look human-written; it makes it look like AI text that was run through Quillbot, which is arguably worse because it suggests deliberate intent to deceive.

The second problem is technical destruction. Academic writing is not regular prose. It contains precise terminology, mathematical notation, citation keys, LaTeX formatting commands, abbreviations, and discipline-specific conventions that generic tools do not understand. A paraphrasing tool might replace "p < 0.05" with "p was less than 0.05" or rewrite "CRISPR-Cas9 ribonucleoprotein complex" as "CRISPR protein combination." It might mangle your BibTeX citation keys, corrupt your equation references, or strip your formatting entirely.
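One way to catch this kind of damage is to compare a rewritten passage against the original and list any protected tokens that went missing. The sketch below is a hypothetical check, not a feature of any tool named in this guide: the regular expressions, the `extra_terms` parameter, and the citation key `smith2020` are all assumptions made for illustration.

```python
import re

# Hypothetical patterns for tokens that must survive a rewrite unchanged.
PROTECTED_PATTERNS = [
    r"\\cite\{[^}]*\}",        # LaTeX citation commands such as \cite{smith2020}
    r"\\ref\{[^}]*\}",         # LaTeX cross-references such as \ref{eq:loss}
    r"\bp\s*[<>=]\s*0?\.\d+",  # p-value notation such as p < 0.05
]


def protected_tokens(text, extra_terms=()):
    """Collect pattern matches plus any caller-supplied terms present in the text."""
    found = set()
    for pattern in PROTECTED_PATTERNS:
        found.update(re.findall(pattern, text))
    found.update(term for term in extra_terms if term in text)
    return found


def lost_tokens(original, rewritten, extra_terms=()):
    """Return protected tokens from the original that are absent from the rewrite."""
    return sorted(t for t in protected_tokens(original, extra_terms) if t not in rewritten)


original = (r"The effect was significant (p < 0.05) for the "
            r"CRISPR-Cas9 ribonucleoprotein complex \cite{smith2020}.")
rewritten = (r"The effect was significant (p was less than 0.05) for the "
             r"CRISPR protein combination \cite{smith2020}.")
terms = ["CRISPR-Cas9 ribonucleoprotein complex"]
print(lost_tokens(original, rewritten, terms))
```

Exact substring matching is deliberately strict here: any change to notation or terminology is reported, and the author decides whether it is acceptable.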

The third problem is structural ignorance. A research paper is not a uniform block of text. The introduction has a different rhetorical function than the methods section, which is different from the results, which is different from the discussion. Each section has its own conventions for tone, tense, level of detail, and citation density. Generic paraphrasing tools treat every section identically, destroying the structural coherence that reviewers and readers expect.

The fourth problem is accountability. When a generic tool corrupts a technical term or misrepresents a statistical finding, you may not catch the error until after submission or, worse, until a reviewer catches it. At that point, you are not just dealing with an AI detection flag; you are dealing with an accuracy concern that undermines the credibility of your entire paper.

The Smarter Solution: ThesisHuman

ThesisHuman was built from the ground up to solve this exact problem: not as a generic paraphrasing tool, but as a specialized academic text humanization engine designed specifically for researchers, graduate students, and academic professionals.

At its core, ThesisHuman uses a proprietary burstiness engine that restructures AI-generated text to align with the statistical properties of authentic human academic writing. Unlike generic tools that apply surface-level word substitutions, ThesisHuman's engine works at the deep structural level where detection algorithms actually operate. The result is text that naturally meets the benchmarks used by Turnitin, GPTZero, Originality.ai, and other major detection platforms.

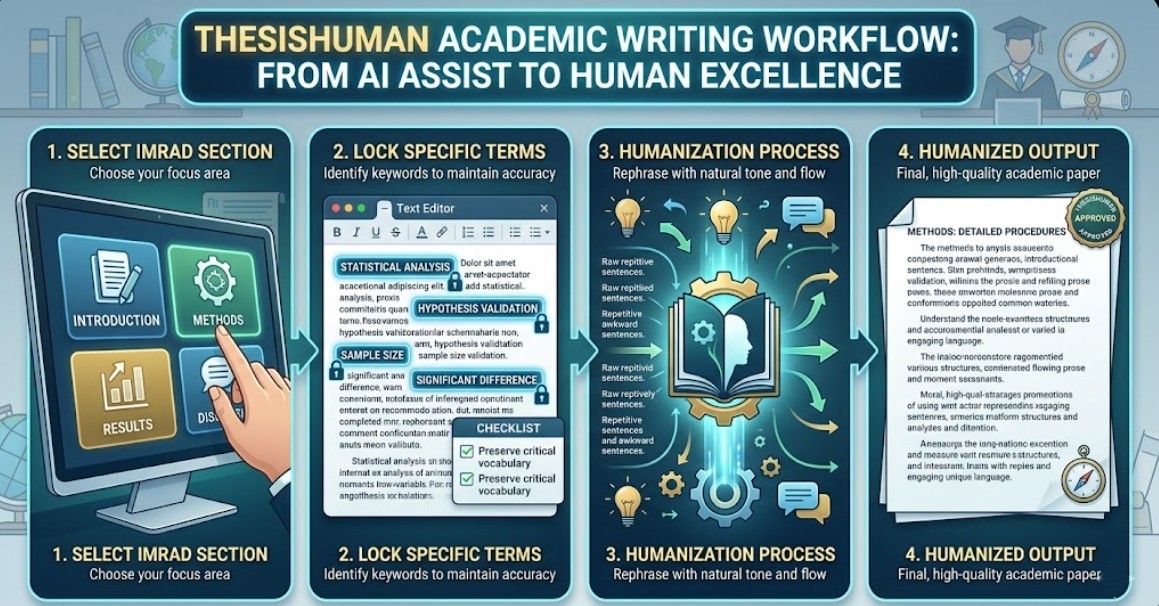

What makes ThesisHuman uniquely suited for academic work is its understanding of scholarly conventions:

- Term Lock technology ensures that your precise technical terminology, chemical formulas, gene names, statistical notations, and discipline-specific vocabulary are preserved exactly as written: never paraphrased, never corrupted, never replaced with generic alternatives.

- IMRAD-aware processing recognizes the distinct rhetorical structure of academic papers (Introduction, Methods, Results, and Discussion) and applies appropriate humanization strategies to each section independently, maintaining the structural coherence that reviewers expect.

- Citation and formatting preservation keeps your BibTeX keys, LaTeX commands, equation references, figure callouts, and other technical formatting completely intact through the humanization process.

- One-click processing means you can humanize an entire thesis chapter in seconds rather than spending days on manual editing that may not even work.

ThesisHuman's engine is continuously updated to stay ahead of evolving detection algorithms. When Turnitin releases a new model version, ThesisHuman's team recalibrates its engine to ensure that processed text continues to meet human-writing benchmarks. This is not a one-time tool; it is a continuously maintained defense for your academic career.

The platform is trusted by thousands of researchers across disciplines, from computational biology and materials science to social psychology and economics. Whether you are writing a master's thesis, a doctoral dissertation, or a journal article, ThesisHuman provides the confidence that your AI-assisted work will meet the standards expected by your institution and your field.

Conclusion

The era of undetected AI-assisted writing is over. Detection technology is here, it is mandatory at most institutions, and it is only getting more sophisticated. The question is not whether you should humanize AI text before submission; it is how you do it without destroying your technical content, wasting days of work, or inadvertently making the problem worse.

Manual humanization is a time sink that cannot keep pace with detector updates. Generic paraphrasing tools corrupt academic content and introduce their own detectable signatures. The only reliable approach is a purpose-built solution that understands both the statistical properties of human writing and the unique demands of academic prose.

Your research took months or years to complete. Your career depends on how it is received. Do not leave your academic future to chance. Try ThesisHuman today and submit every paper with confidence.