Avoiding False Positives: How to Pass Turnitin Originality Checks Ethically

Imagine this: you have spent fourteen months on your doctoral dissertation. Every sentence is yours. Every argument was forged through late nights, stacks of journal articles, and countless conversations with your advisor. You wrote every word yourself no AI, no shortcuts. You submit your final chapter, and three days later, your advisor forwards you an email from the academic integrity office. Turnitin has flagged 38% of your chapter as "likely AI-generated."

You did nothing wrong. But now you have to prove it. And proving a negative proving that you did not use AI is one of the most difficult, stressful, and career-threatening situations a researcher can face. Welcome to the false positive crisis, and it is affecting far more academics than most people realize.

Whether you used AI as a drafting assistant or wrote entirely by hand, understanding how to pass Turnitin originality checks is no longer optional. It is a survival skill for every academic working in 2026.

The False Positive Crisis in Academia

False positives from AI detection tools are not rare edge cases. They are a systemic problem that is growing more severe as institutions adopt detection mandates without fully understanding the limitations of the technology they are deploying.

Consider the documented cases: a graduate student at a major UK university had their thesis defense postponed for six months after Turnitin flagged multiple chapters chapters that had been written, reviewed, and edited over a two-year period with no AI involvement whatsoever. A postdoctoral researcher in the United States had a journal acceptance reversed after the publisher's AI screening tool flagged sections of their manuscript. An international student writing in English as a second language was accused of using AI because their writing exhibited patterns that the detection algorithm associated with machine generation patterns that were actually characteristic of L2 academic English.

"Studies have shown that AI detection tools produce false positive rates between 1% and 15%, depending on the tool and the type of text. In a university processing 10,000 thesis submissions per year, that means hundreds of innocent students could be wrongly flagged."

The psychological impact on falsely accused researchers is devastating. They describe feelings of betrayal, helplessness, and profound anxiety that persist long after the investigation is resolved. Many report difficulty writing at all for months afterward a cruel irony when their entire career depends on producing written work. Some change research directions or even leave academia entirely, unwilling to subject themselves to the constant threat of algorithmic accusation.

The institutional dynamics make the problem worse. When an AI detection flag is raised, the presumption of innocence does not apply in the way it would in a legal proceeding. The burden of proof typically falls on the student or researcher to demonstrate that they did not use AI. How do you prove you wrote something yourself? Version histories can be fabricated. Writing logs can be incomplete. Character references from advisors carry weight, but they are not proof. The entire system is weighted against the accused.

Even when a researcher is ultimately cleared, the damage is done. The investigation is on their record. Their relationship with their advisor may be permanently altered. The weeks or months lost to the investigation cannot be recovered. And the emotional scars the feeling of being treated as a cheater when you did nothing wrong do not heal easily.

How Turnitin's AI Detection Actually Works

To understand why false positives occur and why they are so difficult to prevent through manual effort it helps to understand, at a high level, how Turnitin's AI detection system operates.

Turnitin's AI detection module does not work the same way as its plagiarism detection system. Plagiarism detection compares your text against a database of existing documents looking for matches. AI detection is fundamentally different: it analyzes the statistical properties of your text itself, regardless of whether the content matches anything in any database.

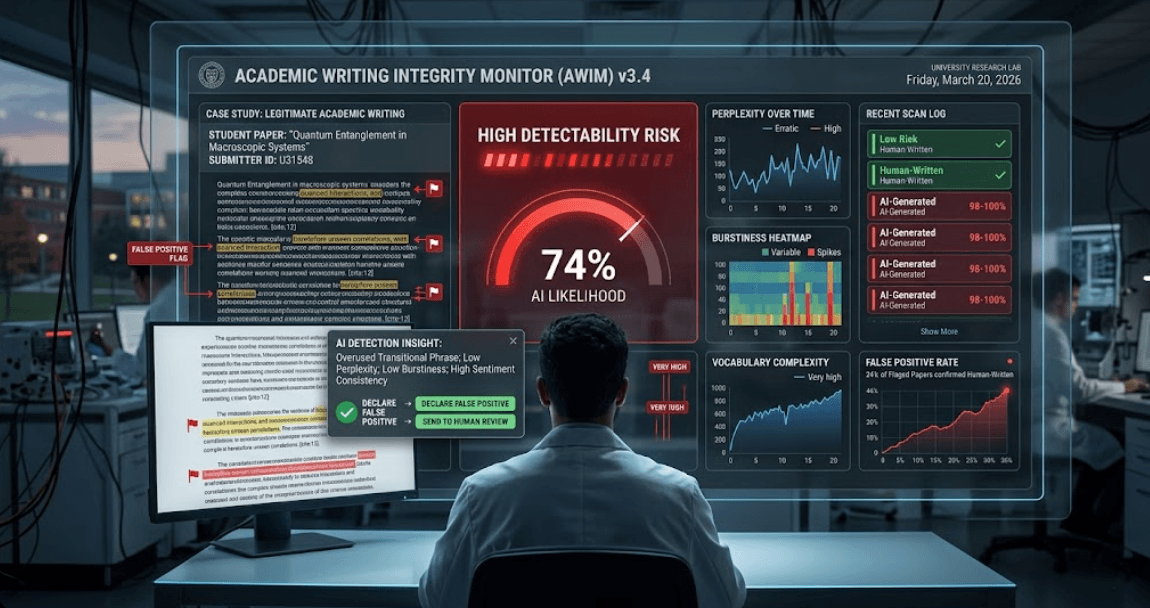

The system processes your text through sliding windows overlapping segments of text that are each analyzed independently. For each window, the algorithm evaluates a set of statistical features that correlate with machine-generated versus human-generated text. These features relate to the predictability of word sequences, the distribution of sentence structures, the variation in complexity across passages, and other mathematical properties of the text.

The scores from each window are then aggregated to produce an overall probability estimate: the likelihood that the text was generated by an AI system. Turnitin reports this as a percentage, and institutions typically set their own thresholds for what triggers an investigation commonly 20% or above, though some institutions flag anything above 10%.

The critical insight is that this is a probabilistic classification system, not a deterministic one. It is estimating likelihood based on statistical patterns, and statistical classification always involves error. Some human-written text will have statistical properties that resemble AI-generated text, just as some AI-generated text might have properties that resemble human writing. The system cannot know with certainty how a text was produced it can only make a statistical judgment.

"Turnitin's own documentation acknowledges that their AI detection system is designed to be used as one piece of evidence in a larger investigation not as a standalone determination of AI use. Yet many institutions treat a high score as proof."

This is why certain types of writing are more vulnerable to AI detector false positives than others. Highly formulaic academic writing the kind that follows strict disciplinary conventions, uses standardized terminology, and adheres to rigid structural templates can register as "AI-like" precisely because it is predictable and uniform. Technical writing in STEM fields, legal analysis, and systematic reviews are particularly susceptible because their conventions naturally produce the kind of statistical regularity that detectors associate with machine generation.

Non-native English speakers are also disproportionately affected. Writing in a second language often involves more careful, deliberate word selection and more uniform sentence structures characteristics that overlap with the statistical signature of AI-generated text. This creates a deeply problematic equity issue that the academic community is only beginning to reckon with.

Why DIY Humanization Is a Dangerous Gamble

Faced with the threat of false positives, many researchers attempt to manually adjust their writing to reduce the likelihood of being flagged. Whether they used AI assistance or not, the logic is the same: if the detector measures certain statistical properties, maybe I can change my writing to avoid triggering them.

This approach fails for several fundamental reasons, and understanding why it fails is crucial for making an informed decision about how to protect yourself.

First, you cannot perceive what detectors measure. The statistical features that drive AI detection operate at a mathematical level that is invisible to human perception. You cannot feel the perplexity distribution of your text. You cannot see the burstiness profile of your paragraphs. Trying to manually adjust these properties is like trying to tune a radio frequency by shouting at it you are working in the wrong domain entirely.

Second, detectors evolve faster than you can adapt. Turnitin and other detection platforms update their models on a regular cycle, incorporating new training data and refining their classification boundaries. A manual strategy that reduces your score in March might be completely ineffective by April. You would need to continuously test, adjust, and re-test an exhausting cycle that diverts time and energy from your actual research.

Third, manual editing can backfire. When you systematically edit text to avoid detection, you often introduce new patterns that are themselves detectable. Consistent restructuring approaches, uniform complexity adjustments, or repetitive substitution strategies create a secondary statistical signature that sophisticated detectors can identify. You might lower your AI probability score only to raise a different flag.

Fourth, the time cost is prohibitive. Researchers who attempt manual humanization report spending 10 to 20 hours per paper time that could be spent on data analysis, literature review, or drafting new sections. For a doctoral student working on a multi-chapter dissertation, the cumulative time investment can exceed hundreds of hours. This is not a sustainable approach, and it represents an enormous opportunity cost at the most critical stage of your academic career.

Fifth, you might make your content worse. In the process of trying to "humanize" your text, you risk introducing errors, weakening your arguments, obscuring your technical precision, and degrading the overall quality of your writing. The cure becomes worse than the disease you end up with a paper that passes detection but fails peer review because the prose has been mangled beyond recognition.

How ThesisHuman Protects Your Academic Career

ThesisHuman exists because the problem of AI detection in academia requires a specialized, continuously maintained, and technically sophisticated solution not a generic paraphrasing tool and certainly not a manual editing strategy.

ThesisHuman's proprietary burstiness engine is specifically calibrated to produce text that aligns with the statistical properties of authentic human academic writing. Rather than applying surface-level word substitutions that detectors can easily identify, the engine restructures text at the deep level where detection algorithms actually operate adjusting the mathematical properties that determine how a passage is classified.

What sets ThesisHuman apart from every other tool on the market is its understanding of academic writing as a specialized domain:

- Term Lock ensures that your technical vocabulary, chemical nomenclature, gene names, statistical notations, and discipline-specific terms are never altered. Your precision is preserved exactly as you intended it.

- IMRAD-aware processing treats each section of your paper according to its rhetorical function. The introduction is processed differently from the methods, which is processed differently from the results and discussion. This maintains the structural integrity that reviewers expect.

- Continuous calibration means the engine is regularly updated to account for changes in detection algorithms. When Turnitin or GPTZero releases a new model, ThesisHuman's team responds with recalibration to ensure ongoing effectiveness.

- One-click processing replaces hours of manual editing with a process that takes seconds. Upload your text, click process, and receive output that naturally meets human-writing benchmarks.

ThesisHuman is not about deception. It is about ensuring that your legitimate academic work is evaluated on its intellectual merit, not penalized by a statistical classifier that cannot distinguish between AI-assisted drafting and deliberate misconduct. It is about protecting yourself from a system that is imperfect, evolving, and capable of causing real harm to innocent researchers.

Thousands of researchers across every major discipline trust ThesisHuman to protect their work. From PhD candidates submitting their first dissertation chapter to established professors preparing journal manuscripts, the platform provides the confidence that your submission will be judged on what matters: the quality of your research and the strength of your arguments.

Conclusion

The false positive crisis is real, it is growing, and it is affecting researchers at every career stage and in every discipline. Whether you used AI as a writing assistant or wrote every word yourself, the threat of being wrongly flagged is something you cannot afford to ignore.

Manual humanization is a dangerous gamble too slow, too unreliable, and too likely to backfire. Generic paraphrasing tools are worse than useless for academic text. The only responsible approach is to use a specialized tool that understands both the detection landscape and the unique demands of scholarly writing.

AI detector false positives have already derailed careers, delayed degrees, and caused immeasurable stress for innocent researchers. Do not become the next cautionary tale.

Protect your research. Protect your reputation. Protect your future. Try ThesisHuman today and pass Turnitin originality checks with confidence.