Why Generic AI Fails at Academic Writing (And What to Use Instead)

It starts innocently enough. You have a thesis chapter due in two weeks. You have mountains of notes, a rough outline, and a growing sense of panic. You open ChatGPT, paste in your outline, and ask it to draft your literature review. Within minutes, you have three polished paragraphs that read beautifully. The prose is fluent. The transitions are smooth. The structure is clean. You feel a wave of relief.

Then you look more closely. Your carefully chosen term "allosteric modulation" has been replaced with "regulatory adjustment." Your APA-formatted citations have been transformed into vague attributions like "according to recent studies." The distinction between your Introduction and Discussion has evaporated: both sections read in the same voice, the same tone, the same generic register. And when you run the output through a detection tool, it lights up: 94% AI-generated.

This is the ChatGPT trap, and it catches thousands of researchers every year. Generic AI tools are astonishingly good at producing fluent, readable text. They are also astonishingly bad at producing text that meets the standards of academic writing, and astonishingly dangerous when it comes to detection. Understanding why generic AI fails at academic writing is the first step toward protecting your research and your career.

The ChatGPT Trap: Why Researchers Get Burned

The seduction of generic AI tools like ChatGPT, Claude, and Gemini for academic writing is easy to understand. They are free or cheap, instantly accessible, and capable of producing grammatically perfect prose on virtually any topic. For a stressed researcher facing a deadline, they seem like a miracle.

But generic AI tools were not designed for academic writing. They were designed to be helpful, harmless, and honest across a vast range of tasks, from writing birthday poems to explaining quantum mechanics to generating marketing copy. Their training data is a broad cross-section of the internet, and their optimization targets prioritize fluency, helpfulness, and engagement over the specific demands of scholarly prose.

This means that when you ask a generic AI to help with your thesis, it applies the same text-generation approach it uses for everything else. It does not know that your Methods section requires past tense and passive voice. It does not understand that "cell viability" and "cell survival" are not interchangeable in your specific experimental context. It does not recognize that your Discussion section should contain hedged interpretations while your Results section should contain direct observations. It treats your research paper as a block of generic text, and the output reflects that.

"Generic AI is optimized for fluency and helpfulness. Academic writing demands precision, convention awareness, and disciplinary specificity. These are fundamentally different objectives, and no amount of clever prompting bridges the gap."

The worst part is that the output looks good at first glance. It is well-structured, grammatically correct, and reads smoothly. This superficial polish hides the deeper problems, which reviewers, advisors, and detection algorithms will catch even if you do not.

Five Ways Generic AI Destroys Your Research Paper

The failures of generic AI in academic writing are not random. They fall into five predictable categories, each of which can independently derail your paper. Together, they represent a comprehensive case against using generic tools for any aspect of your academic writing workflow.

1. No IMRAD Awareness

The IMRAD structure (Introduction, Methods, Results, and Discussion) is not an arbitrary convention. It reflects the logical flow of scientific reasoning, and each section has distinct requirements for tone, tense, level of detail, and rhetorical function. An Introduction presents the problem and situates it in the literature. Methods describe what was done, typically in past tense and with enough detail for replication. Results report findings directly. Discussion interprets findings, acknowledges limitations, and suggests implications.

Generic AI tools treat every section the same way. They apply the same voice, the same level of hedging, the same sentence structures, and the same rhetorical strategies regardless of which section they are generating. The result is a paper that feels monolithic: a single, uniform block of text that fails to respect the structural expectations of the format. Experienced reviewers can detect this immediately, even without AI detection tools. It reads like someone who has never written a research paper describing what a research paper should contain.

2. Terminology Destruction

Precision of terminology is the foundation of academic writing. In every discipline, specific terms carry specific meanings that have been refined through decades of scholarly discourse. "Reliability" does not mean the same thing in psychometrics as it does in everyday English. "Significant" in a statistical context is not interchangeable with "important." "Expression" in molecular biology is not a synonym for "production."

Generic AI tools routinely replace precise technical terms with generic alternatives. They might rewrite "Western blot analysis revealed upregulation of p53" as "testing showed increased levels of the protein." They might replace "Granger causality" with "predictive relationship" or transform "Cronbach's alpha" into "internal consistency measure." Each substitution may seem minor in isolation, but collectively they strip your paper of its technical credibility and signal to any domain expert that the author does not have a confident command of the terminology.

In fields where terminology precision is critical (medicine, chemistry, law, engineering), a single wrong term can change the meaning of a statement entirely. A paper that describes a drug as having "reduced effectiveness" when the data showed "reduced bioavailability" is not just imprecise; it is wrong in ways that could have real-world consequences.
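Substitutions like these can be caught mechanically before a draft goes anywhere. The following is a minimal sketch, assuming you maintain your own list of terms that must survive any rewrite. The function name `dropped_terms` is hypothetical and not part of any tool named in this article, and the matching is plain case-insensitive substring counting, so it will not notice a term that is kept but used in the wrong sense.

```python
import re


def dropped_terms(original: str, rewritten: str, terms: list[str]) -> dict[str, tuple[int, int]]:
    """Report protected terms that occur less often in the rewrite.

    Returns a mapping of term -> (count in original, count in rewritten)
    for every term whose count went down.
    """
    def count(term: str, text: str) -> int:
        # re.escape keeps characters such as apostrophes or dots literal.
        return len(re.findall(re.escape(term), text, flags=re.IGNORECASE))

    report = {}
    for term in terms:
        before = count(term, original)
        after = count(term, rewritten)
        if after < before:
            report[term] = (before, after)
    return report


# Example sentences taken from the discussion above.
original = "Western blot analysis revealed upregulation of p53."
rewritten = "Testing showed increased levels of the protein."
protected = ["Western blot", "upregulation", "p53"]

for term, (before, after) in dropped_terms(original, rewritten, protected).items():
    print(f"{term!r}: {before} occurrence(s) before, {after} after")
```

A non-empty report is a prompt to reread the passage, not proof of an error, because a legitimate edit can also remove a term.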

3. Citation Corruption

Academic papers are built on a foundation of citations. Every claim, every contextualization, every comparison to prior work is anchored by references to specific published sources. The citation system, whether APA, MLA, Chicago, Vancouver, or a discipline-specific variant, is not a superficial formatting requirement. It is the mechanism by which your paper connects to the broader scholarly conversation.

Generic AI tools are notoriously unreliable with citations. They fabricate references that do not exist, attribute findings to wrong authors, merge details from multiple papers into a single fictitious citation, and mangle formatting in ways that break bibliographic software. If you provide BibTeX keys or reference numbers, generic tools often strip them, reformat them, or replace them with narrative attributions like "as shown in previous research."
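For LaTeX drafts, stripped citation keys are easy to check for. The sketch below assumes citations are written with `\cite`-style commands (for example `\cite{...}`, `\citep{...}`, `\citet{...}`). The helper names `cite_keys` and `lost_keys` are illustrative assumptions, and the regular expression is a simplification that will not cover every citation package. It only detects keys that disappeared. It cannot tell whether a reference is real, which still requires looking up each source.

```python
import re

# Matches \cite, \citep, \citet, etc., with optional [..] arguments,
# and captures the comma-separated key list inside the braces.
CITE_PATTERN = re.compile(r"\\cite[a-zA-Z]*\*?(?:\[[^\]]*\])*\{([^}]*)\}")


def cite_keys(text: str) -> set[str]:
    """Collect every citation key used in a LaTeX fragment."""
    keys = set()
    for group in CITE_PATTERN.findall(text):
        for key in group.split(","):
            key = key.strip()
            if key:
                keys.add(key)
    return keys


def lost_keys(original: str, rewritten: str) -> set[str]:
    """Keys cited in the original that no longer appear in the rewrite."""
    return cite_keys(original) - cite_keys(rewritten)


# Hypothetical keys, used only to illustrate the check.
original = r"Prior work reports this effect \citep[see][]{smith2020, lee2019}."
rewritten = r"As shown in previous research, this effect is well known."

print(sorted(lost_keys(original, rewritten)))
```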

The consequences of citation corruption extend beyond formatting. A fabricated reference is academic fraud, full stop. If a reviewer or reader follows a citation and discovers that the source does not exist, your credibility as a researcher is destroyed. And in an era of increasing scrutiny around AI use, citation fabrication is one of the most easily verifiable and damaging errors a paper can contain.

4. Flat Statistical Signature

This is the failure that connects directly to detection. When a generic AI generates text, it produces output with remarkably uniform statistical properties. Sentence lengths cluster around a narrow range. Word choice follows highly predictable patterns. The variation in complexity across paragraphs is minimal. This uniformity is a natural consequence of how language models generate text: token by token, each drawn from a probability distribution that favors the most likely next word.

Human writing, by contrast, is messy. Humans write a 40-word sentence followed by a 6-word sentence. They use an obscure technical term in one paragraph and a colloquial expression in the next. They write a dense, complex argument and then pause for a simple declarative summary. This irregularity, often called burstiness, is the statistical hallmark of human authorship, and it is precisely what AI-generated text lacks.
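To make the idea of sentence-length irregularity concrete, here is a rough sketch that computes the coefficient of variation of sentence lengths (standard deviation divided by mean). This is only an illustrative proxy chosen for this example. It is not how any particular detector works, and the function names and the naive sentence splitter (which will stumble on abbreviations such as "et al.") are assumptions.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on sentence-ending punctuation and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length; 0.0 means perfectly uniform."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure spread
    return statistics.stdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The sun was out."
varied = "Stop. The experiment that we ran over three long weeks finally produced a clear result."

print(sentence_lengths(uniform), length_variation(uniform))
print(sentence_lengths(varied), length_variation(varied))
```

A higher value means sentence lengths swing more widely. A single number like this says nothing about authorship on its own, but it shows the kind of property the paragraph above describes.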

Detection algorithms are specifically engineered to measure this uniformity. A paper generated by ChatGPT or any other generic LLM will produce a statistical profile that screams "machine-generated" to these algorithms, regardless of how good the content is. And because the uniformity is a property of the text's deep structure, not its surface vocabulary, no amount of manual word-swapping will fix it.

5. Wrong Tone and Register

Academic writing exists on a spectrum of formality, hedging, and assertiveness that varies not just between disciplines but between sections of the same paper. An Abstract is compressed and declarative. An Introduction is contextualizing and argumentative. A Methods section is precise and procedural. A Discussion is interpretive and cautious. A Conclusion is synthetic and forward-looking.

Generic AI tools cannot reliably produce these distinctions. They tend to default to a middle-register, slightly formal, moderately hedged tone that they apply uniformly throughout the text. The result reads like a Wikipedia article: informative but generic, competent but characterless. It lacks the disciplinary voice that marks you as a member of your research community, and it lacks the tonal variation that signals your understanding of the conventions of each section.

For experienced reviewers and advisors, this tonal flatness is immediately apparent. Even without running the text through a detection tool, a reader with domain expertise can sense that something is off. The text is too smooth, too balanced, too uniformly competent. It lacks the fingerprints of a human writer who is wrestling with ideas, negotiating uncertainty, and making deliberate rhetorical choices.

The Real Cost of Using the Wrong Tool

The five failures described above are not merely quality issues. They are career-threatening risks that compound in ways many researchers do not anticipate until it is too late.

Rejected papers mean wasted months. If your manuscript is rejected because of AI detection flags, terminology errors, or citation problems introduced by a generic tool, you do not just lose the publication. You lose the months of research that went into it. You lose your place in the publication timeline. In fast-moving fields, a delay of even a few months can mean that another group publishes similar findings first, rendering your work less impactful or even redundant.

Detection flags trigger investigations. As we have discussed in other articles, an AI detection flag does not result in a quiet email. It triggers formal academic integrity proceedings that can freeze your degree progress, damage your relationship with your advisor, and create a permanent record that follows you throughout your career.

Reputation damage is cumulative and irreversible. In academia, reputation is everything. Your advisor's willingness to advocate for you, your reviewers' assessment of your work, your committee's confidence in your abilities: all of these depend on your perceived integrity and competence. A single incident involving AI-generated content can erode trust that took years to build, and that trust may never fully recover.

"The time you think you are saving with ChatGPT is borrowed against your future. When the bill comes due, in the form of a rejected paper, a formal investigation, or a damaged reputation, the interest rate is devastating."

Career setbacks cascade. A rejected thesis chapter delays your defense. A delayed defense delays your graduation. A delayed graduation means you miss postdoc application deadlines. Missing postdoc deadlines means missing grant cycles. Missing grant cycles means falling behind peers who will compete with you for the same tenure-track positions. In the hypercompetitive landscape of academic careers, a single disruption at the wrong moment can alter your trajectory permanently.

The irony is that generic AI tools are meant to save time. But when the time saved in drafting is repaid many times over in corrections, investigations, revisions, and reputation repair, the net result is a massive time deficit plus psychological damage that no tool can repair.

What Makes ThesisHuman Different

ThesisHuman was built to address every failure mode that generic AI tools exhibit in academic writing. It is not a general-purpose writing assistant. It is a specialized academic text humanization engine designed by researchers, for researchers, with a singular focus: transforming AI-assisted academic text into prose that meets both human expert standards and algorithmic detection benchmarks.

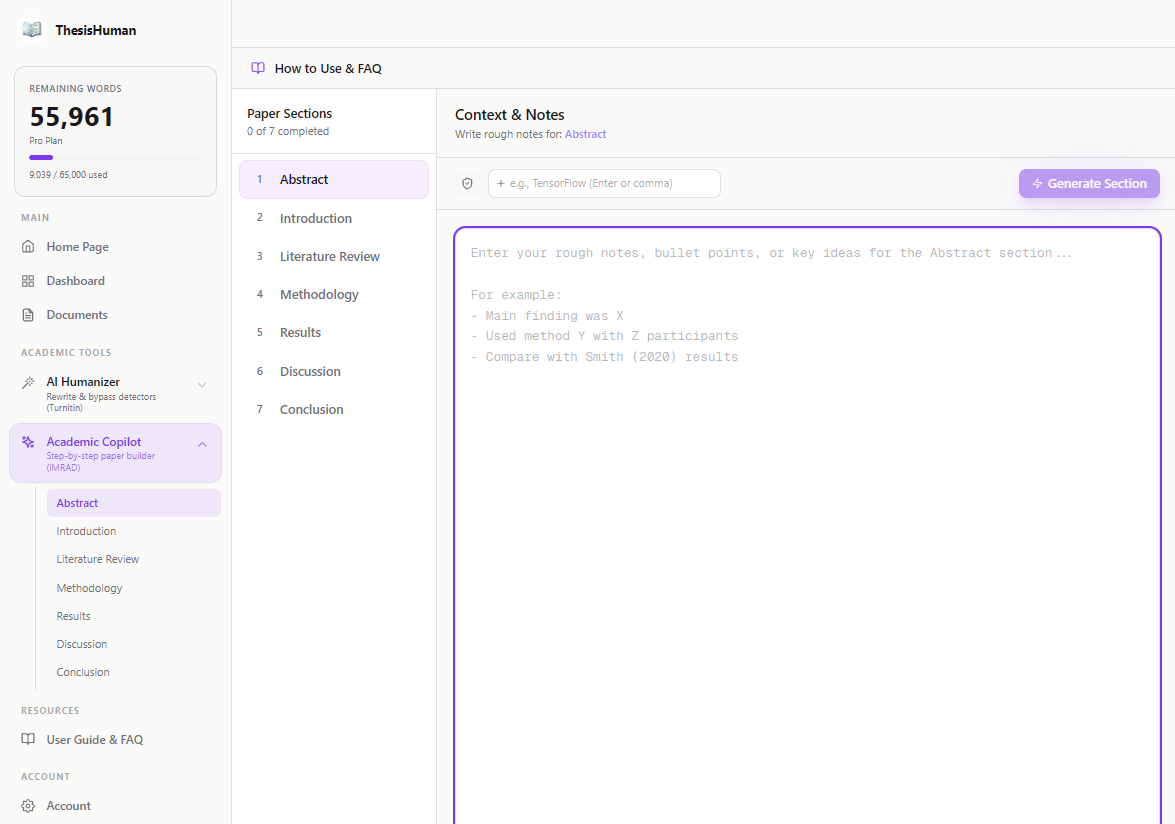

Where generic AI tools treat all text the same, ThesisHuman's IMRAD-aware processing recognizes the distinct rhetorical function of every section of your paper. It understands that your Introduction needs to build an argument, your Methods need to be replicable, your Results need to be direct, and your Discussion needs to be interpretive. Each section is processed according to its own conventions, preserving the structural integrity that defines quality academic writing.

Where generic tools destroy your terminology, ThesisHuman's Term Lock technology ensures that every technical term, every notation, every discipline-specific expression is preserved exactly as you wrote it. Your "allosteric modulation" stays "allosteric modulation." Your "Granger causality" stays "Granger causality." Your precision is your credibility, and ThesisHuman protects it absolutely.

Where generic tools corrupt your citations, ThesisHuman preserves your reference formatting, BibTeX keys, and citation structures completely. Your bibliography survives the humanization process intact, maintaining the scholarly infrastructure that connects your work to the literature.

And where generic tools produce text with a flat statistical signature that detectors flag instantly, ThesisHuman's proprietary burstiness engine restructures your text to align with the natural statistical properties of human academic writing. The result is text that meets the benchmarks used by Turnitin, GPTZero, Originality.ai, and other major detection platforms, not because it fools them, but because it genuinely exhibits the statistical characteristics of human-authored prose.

ThesisHuman is continuously updated to stay ahead of evolving detection technology. The engine is recalibrated regularly against the latest versions of all major detection platforms, ensuring that your processed text continues to meet standards as the detection landscape changes. This ongoing maintenance is what separates a professional tool from a one-time hack, and it is what gives thousands of researchers the confidence to submit their work without fear.

The platform processes your text in seconds, replacing the days or weeks of manual editing that cannot reliably achieve the same result. One click transforms your AI-assisted draft into submission-ready academic prose that preserves your technical content, respects your structural conventions, and naturally aligns with human-writing benchmarks.

Conclusion

Generic AI tools fail at academic writing in five fundamental ways: they ignore IMRAD structure, destroy terminology, corrupt citations, produce detectable statistical signatures, and flatten the tonal variation that characterizes expert scholarly prose. Each of these failures creates risk: risk of rejection, risk of detection, risk of reputation damage, and risk of career-altering consequences.

The solution is not to avoid AI entirely; that ship has sailed, and the productivity gains are too significant to ignore. The solution is to use the right tool for the job. Generic AI tools have their place, but that place is not in your academic writing workflow. Your research deserves a specialized engine that understands the demands of scholarly prose and the reality of the detection landscape.

ThesisHuman is that engine. Purpose-built for academic text. Continuously calibrated against detection algorithms. Trusted by thousands of researchers across every discipline. One click to transform your AI-assisted draft into prose that meets the highest standards of both human expertise and algorithmic evaluation.

Stop gambling with generic tools that put your career at risk. Your research is too important, your time is too valuable, and the consequences of getting it wrong are too severe. Try ThesisHuman today and give your academic writing the specialized treatment it deserves.